May 31, 2012

Everything is connected

This section of my book on the hunter-gatherer worldview discusses its most important underlying assumption: that everything is connected. At the end of the section, I speculate that the parallel between the connectivity of this worldview and the intrinsic connectivity of language (through recursion) is not coincidental, but arises because both conceptual systems emerge from the unique connectivity and patterning instinct of the human prefrontal cortex (pfc).

Everything is connected

Of all the underlying patterns of the hunter-gatherer worldview, there is probably none so pervasive as the implicit belief that all aspects of the world – humans, animals, ancestors, spirits, trees, rocks and rivers – are interrelated parts of a dynamic, integrated whole. In the words of one anthropologist, “hunter-gatherers think about the world in a highly integrated fashion, with an interpenetration of the natural and social in a single integrated environment, and an ideology encompassing humans, animals, and plants in a living nature.”[1] The natural environment is, for hunter-gatherers, most definitely alive. Anthropologist Richard Nelson writes evocatively about the sentient natural world perceived by the Koyukon people of Alaska’s boreal forest:

Traditional Koyukon people live in a world that watches, in a forest of eyes. A person moving through nature – however wild, remote, even desolate the place may be – is never truly alone. The surroundings are aware, sensate, personified. They feel. They can be offended. And they must, at every moment, be treated with proper respect. All things in nature have a special kind of life… All that exists in nature is imbued with awareness and power; all events in nature are potentially manifestations of this power; all actions toward nature are mediated by consideration of its consciousness and sensitivity.[2]

This sentience of nature is frequently manifested in the spirits that are perceived to exist all around. To call these spirits “supernatural” would be a serious misconception, applying another modern viewpoint anachronistically to the hunter-gatherer cosmology.

These spirits are an integral part of the natural world just as much as humans and other animals. As Canadian anthropologist Diamond Jenness wrote about the spirits of the Ojibwa (Chippewa) Indians, “they are a part of the natural order of the universe no less than man himself, whom they resemble in the possession of intelligence and emotions. Like man, too, they are male or female, and in some cases at least may even have families of their own. Some are tied down to definite localities, some move from place to place at will; some are friendly to Indians, others hostile.”[3]

Just as the spirits are integrally connected to the natural world, so those aspects of life that we define as “religion” permeate all the normal, daily activities of the hunter-gatherer. As author Robert Wright puts it, “one of the more ironic properties of hunter-gatherer religion: it doesn’t exist. That is, if you asked hunter-gatherers what their religion is, they wouldn’t know what you were talking about. The kinds of beliefs and rituals we label ‘religious’ are so tightly interwoven into their everyday thought and action that they don’t have a word for them.”[4]

One forager tradition that powerfully demonstrates the interconnectedness of each of the dimensions of life that we tend to keep separate is the Aboriginal Dreamtime. As described by researcher Deborah Bird Rose, “in Aboriginal Australia, the living world is a created world, brought into being as a world of form, difference, and connection by creative beings called Dreamings.”[5] Rose goes on to describe the Aboriginal creation myth of the Dreamtime:

The Australian continent is crisscrossed with the tracks of the Dreamings: walking, slithering, crawling, flying, chasing, hunting, weeping, dying, birthing. They were performing rituals, distributing the plants, making the landforms and water, establishing things in their own places, making the relationships between one place and another. They left parts of themselves, looked back and looked ahead, and still traveled, changing languages, changing songs, changing skin. They were changing shape from animal to human and back to animal and human again, becoming ancestral to particular animals and humans. Through their creative actions they demarcated a world of difference and of relationships that crosscut difference.[6]

Through the Dreamtime, Australian Aboriginals integrate not only the human, natural and spiritual domains, but also the past, present and future. We are used to creation myths describing events that occurred long ago, but for the Aboriginals the Dreamtime exists in the present as much as in the past.

In the words of one observer, “it exists as a kind of metaphysical now, … a spiritual yet nonetheless real dimension of time and space somehow interpenetrating and concurrent with our own.”[7] The creative ancestors may connect with humans in dreams, but also in other ways. For example, in a phenomenon known as “conception Dreaming,” it’s believed that the spirit of the place where a woman initially conceives a baby enters the fetus there, and remains part of the child after birth.[8] As Rose describes it, there is “thus a web of relatedness in which everything is connected to something that is connected to something, and so forth.”[9] Here is the same idea expressed in the more concrete words of an elderly Aboriginal woman, Daisy Utemorrah:

All these things, the plants and the trees, the mountains and the hills and the stars and the clouds, we represent them. You see these trees over there? We represent them. I might represent that tree there. Might be my name there, in that tree. Yes, and the reeds, too, in the waters… the frogs and the tadpoles and the fish… even the crickets… all kinds of things… we represent them.[10]

This integration of meaning, where “nothing is without connection,”[11] is intriguingly reminiscent of the inherent structure of language as described earlier.[12] In language, the power of recursion can be viewed as a “magical weave,” permitting the connection of previously separate modules of the brain in order to create new meaning. Just as in language a symbolic network permits emergent meaning to arise from its connectivity, so in the hunter-gatherer worldview, all aspects of life participate in a conceptual system which “links species, places, and regions, and leaves no region, place, species, or individual standing outside creation, life processes, and responsibilities.”[13] I suggest that this similarity between the structure of language and the forager worldview is not coincidental, but rather arises from the fact that both conceptual systems are created by the innate connectivity and patterning instinct of the pfc.

[1] Winkelman, M. (2002). “Shamanism and Cognitive Evolution.” Cambridge Archaeological Journal, 12(1), 71-101.

[2] Nelson, R. K. (2002). “The watchful world”, in G. Harvey, (ed.), Readings in Indigenous Religions. New York: Continuum, pp. 343-364.

[3] Cited in Lévi-Strauss, C. (1966). The Savage Mind, Chicago: The University of Chicago Press.

[4] Wright (2009) op. cit., 19-20.

[5] Rose, D. B. (2002). “Sacred site, ancestral clearing, and environmental ethics”, in G. Harvey, (ed.), Readings in Indigenous Religions. New York: Continuum, pp. 319-342.

[6] Ibid.

[7] Arden, H. (1994). Dreamkeepers: A Spirit-Journey into Aboriginal Australia, New York: HarperCollins, 3-4.

[8] Rose, D. B. (1996). Nourishing Terrains: Australian Aboriginal Views of Landscape and Wilderness, Canberra: Australian Heritage Commission, 39-40.

[9] Rose (2002) op. cit.

[10] Arden (1994) op. cit., 23.

[11] Rose (2002) op. cit.

[12] See Chapter 3, “What’s special about language?”, page 29.

[13] Ibid.

October 31, 2010

Out of Africa

This section of my book, Finding the Li: Towards a Democracy of Consciousness, covers the exodus of modern humans from Africa, and describes what happened when they met the Neanderthals in Europe. It’s taken from the chapter “The Rise of Mythic Consciousness.” The section begins by answering the question posed at the end of the previous section, known as the “sapient paradox”: if modern humans evolved more than 150,000 years ago, why did it take until 40,000 years ago for humans to show symbolic behavior in the Upper Paleolithic revolution?

Out of Africa

Well, actually, according to a growing number of experts, it did happen sooner. A lot sooner. In fact, there’s evidence that the beginnings of cultural modernity may have occurred at least seventy-five thousand years ago. It’s just that it wasn’t in Europe that these stirrings of modernity first showed up, but in South Africa. In recent years, excavations at two important sites on the coastline of South Africa – Howieson’s Poort and Blombos Cave – have uncovered startling new evidence of symbolic behavior by our human ancestors a full thirty-five thousand years before the Upper Paleolithic revolution in Europe. Some of the findings include engraved ostrich eggshells and perforated shells that were probably used as personal ornaments, but the most striking treasure unearthed to date has been one particular piece of ochre with a series of complex cross-hatched lines engraved into it.[1] [Figure 3.] These lines, in the view of archaeologists Renfrew and Mellars, “seem certainly to be deliberate patterning” and represent “the earliest unambiguous forms of abstract ‘art’ so far recorded,” and, along with the other findings, suggest that “the human revolution developed first in Africa … between 150,000 and 70,000 years ago.”[2] In fact, some additional engraved pieces have been found that are even older, leading Mellars to assert that “there is now no question that explicitly symbolic behavior was taking place by 100,000 years ago or earlier.”[3]*

If our ancestors were thinking symbolically and behaving like modern humans a hundred thousand years ago, then what about the Upper Paleolithic revolution and the Great Leap Forward? Doesn’t it perhaps begin to seem like a series of tentative steps rather than a great leap? Certainly some observers think so. Two archaeologists, Sally McBrearty and Alison Brooks, have caused a stir with an article entitled “The revolution that wasn’t: a new interpretation of the origin of modern human behavior,” arguing exactly this point.[4] And even the momentous findings at Blombos and Howieson’s Poort seem to peter out of the archaeological record afterward, suggesting “intermittent” advances in modernity rather than one sweeping tidal wave of progress.[5] Mellars describes the process as possibly “a gradual working out of these new cognitive capacities” of our human ancestors “under the stimulus of various kinds of environmental, demographic, or social pressures.”[6]

But if the excitement of the Great Leap Forward is somewhat diminished, another epic story, perhaps grander than any other, has come into the foreground. It’s a story that’s emerged through advances in mitochondrial DNA analysis, through which scientists can trace the patterns of past molecular changes in the DNA of modern humans and thus estimate when different human groups migrated. The story has come to be called “Out of Africa,” and it goes something like this. At some time around sixty to eighty thousand years ago, a certain lineage of humans (known as L3 based on their mitochondrial DNA type) began to expand throughout Africa, becoming the majority population throughout the continent with the exception of the Khoisan (Bushmen) and the Biaka (Pygmies). One group of this L3 lineage got as far north as Ethiopia, and from this group a small initial contingent, no more than a few hundred people, migrated across the mouth of the Red Sea, through Arabia and eastward along southern Asia until reaching Australia. This epic journey happened sometime between fifty and sixty-five thousand years ago. At some point during this migration, another group headed north into western or central Asia, and from there arrived in Europe, where their descendants eventually instigated the Upper Paleolithic “revolution.” A couple of startling facts arise from this story. The first is that all non-African people alive today are descendants of this very small group of several hundred that made its way across the Red Sea. Secondly, because of this, there is far greater genetic diversity among different African populations than among all the non-African peoples on the planet combined.[7]

It’s a grand story, but it still raises as many questions as it answers. What led to the original expansion of the L3 group through Africa? And how does that tie in with the findings at Blombos Cave? And we still have the “sapient paradox” to contend with: if humans were acting so modern all this time, why is there nothing special to show for it in the archaeological record other than some pierced shells and cross-hatched ochre until the flowering of achievements in Europe forty thousand years ago?

Archaeologist Richard Klein believes that the answer to the first set of questions may be genetic. In his view, a genetic mutation, most likely in the “neural capacity for language or for ‘symboling’,” is the best explanation for the dramatic changes that ensued. Here’s how he argues his case:

When the full sweep of human evolution is considered, it is surely reasonable to propose that the shift to a fully modern behavioral mode and the geographic expansion of modern humans were also coproducts of a selectively advantageous genetic mutation. Arguably, this was the most significant mutation in the human evolutionary series, for it produced an organism that could alter its behavior radically without any change in its anatomy and that could cumulate and transmit the alterations at a speed that anatomical innovation could never match. As a result, the archeological record changed more in a few millennia after 40 ky ago than it had in the prior million years.[8]

There are, however, other explanations for the dramatic transformation in human behavior which don’t require a genetic mutation to happen at just the right time. Mellars has suggested that a positive feedback loop may have begun with the more efficient hunting weapons that the Blombos and Howieson’s Poort groups would have been capable of constructing. Increased hunting efficiency, along with expanded trading and exchange networks between different groups, may have led to a sustained growth in population. In fact, the mitochondrial DNA analysis does suggest rapid population growth between sixty and eighty thousand years ago.[9] Another group of archaeologists has produced mathematical studies showing that once a population reaches a certain critical size, there is a greater impetus for innovation and, perhaps most importantly, these innovations are more likely to be copied by other communities, creating a “cultural ratchet effect.”[10]

Either a genetic mutation or the positive feedback loop from denser populations could explain the successful migration out of Africa. But neither is sufficient to explain the Upper Paleolithic revolution. The population densities in Europe were no greater than those in Africa, and the people who made it to Europe were genetically no different from the rest of the L3 group. So how might we explain that explosion in symbolic behavior and thus resolve the “sapient paradox”? An important clue might be found in examining what these L3 humans encountered when they arrived in Europe.

When our human ancestors first showed up in Europe, they weren’t the only ones around. The continent was already populated by Neanderthals, close cousins of Homo sapiens who had diverged genetically only a few hundred thousand years earlier.[11]* The Neanderthals had withstood more than two hundred thousand years of climatic variations in the cold reaches of Ice Age Europe, and with their heavy-set bodies they would have seemed better equipped than the Homo sapiens arriving from Africa to handle Europe’s Ice Age climate. But within ten thousand years of the arrival of Homo sapiens on the scene, the Neanderthals were extinct.

To many anthropologists, the evidence seems cut and dried: the Neanderthals were outcompeted by their cognitively superior cousins. They were “driven to extinction” by the Homo sapiens invaders simply because they were “unable to compete for resources.” They “perceived and related to the environment around them very differently” than our human ancestors and, as a result, “wielded culture less effectively.” There’s even been mention of a “Pleistocene holocaust,” prompting some observers to look at our more recent historical record and note acerbically that “Homo sapiens has not been notable for a tolerance of differences or a drive toward coexistence with differing cultures – to say nothing of competing species.”[12]

Other archaeologists have, however, argued that the situation was not so simple. In fact, they claim, the Neanderthals showed evidence of symbolic behavior just as sophisticated as that of their Homo sapiens competitors. Traditionally, when bone tools and ornaments were dug up from Neanderthal sites, they were dismissed on the grounds that the Neanderthals were merely mimicking the Homo sapiens invaders without understanding what these objects meant. But recently, similar ornaments have been discovered dating back fifty thousand years, ten thousand years before modern humans came on the scene, offering unequivocal evidence of Neanderthal symbolic thought.[13] So what should we make of that?

A possible resolution to this debate arises if we go back and consider the three stages of language evolution posited in the previous chapter.[14] Under that hypothesis, the hominids living around three hundred thousand years ago had reached the second stage of language evolution, with a protolanguage that accompanied the stone-working complexity known as Levallois technology (which is associated with the Neanderthals). Possibly, the Neanderthals had reached that level of cognitive sophistication, but were unable to make the leap across the metaphoric threshold to modern language. It’s easy to imagine how a group that could say to each other “fire stone hot” would be outcompeted by another group that could say “I put the stone that you gave me in the fire and now it’s hot.” A recent paper by Coolidge and Wynn speculating on the Neanderthal mind is consistent with this hypothesis, proposing that Homo sapiens had greater “syntactical complexity” than the Neanderthals, including the use of subjunctive and future tenses, and that this enhanced use of language may have given modern humans “their ultimate selective advantage over Neandertals.”[15]

However, the competition between Homo sapiens and Neanderthals was probably fierce and most likely endured for thousands of years. In fact, it’s this very competition that might have been the catalyst for the dramatic achievements of the Upper Paleolithic revolution, thus providing a possible solution to the “sapient paradox.” As we know from modern history, warfare is frequently the grim handmaiden of major technological innovations, and it’s reasonable to believe that the same could have been true of that much earlier conflict. Archaeologist Nicholas Conard, for example, has raised the possibility that “processes such as competition at the frontiers between modern and archaic humans contributed to the development of symbolically mediated life as we know it today.”[16]*

There’s more at stake in the possible distinction between Neanderthal and modern human cognition than just a forensic post mortem of how the Neanderthals became extinct. As we’ll see in the next section, this distinction may help us to understand the underlying sources of the mythic consciousness that became the hallmark of everything accomplished by Homo sapiens from that time on.

[1] Henshilwood, C. S., d’Errico, F., Vanhaeren, M., van Niekerk, K., and Jacobs, Z. (2004). “Middle Stone Age Shell Beads from South Africa.” Science, 304, 404; Henshilwood, C. S., and Marean, C. W. (2003). “The Origin of Modern Human Behavior: Critique of the Models and Their Test Implications.” Current Anthropology, 44(5), 627-651; Henshilwood, C. S. et al. (2002). “Emergence of Modern Human Behavior: Middle Stone Age Engravings from South Africa.” Science, 295, 1278-80.

[2] Renfrew, op. cit.; Mellars, P. (2006). “Why did modern human populations disperse from Africa ca. 60,000 years ago? A new model.” PNAS, 103(June 20, 2006), 9381-9386.

[3] Mellars (2006) op. cit. Notably, the program director at Blombos, Christopher Henshilwood, sees these findings as evidence that, “at least in southern Africa, Homo sapiens was behaviorally modern about 77,000 years ago,” and other archaeologists who were initially skeptical of these claims are increasingly coming around, acknowledging that “the new material removes any doubt whatsoever.” See Henshilwood (2002) op. cit., and Balter, M. (2009). “Early Start for Human Art? Ochre May Revise Timeline.” Science, 323(30 January 2009), 569, quoting archaeologist Paul Pettitt.

[4] McBrearty, S., and Brooks, A. S. (2000). “The revolution that wasn’t: a new interpretation of the origin of modern human behavior.” Journal of Human Evolution, 39(2000), 453-563.

[5] Powell, A., Shennan, S., and Thomas, M. G. (2009). “Late Pleistocene Demography and the Appearance of Modern Human Behavior.” Science, 324(5 June 2009), 1298-1301.

[6] Mellars (2006) op. cit.

[7] Forster, P. (2004). “Ice Ages and the mitochondrial DNA chronology of human dispersals: a review.” Phil. Trans. R. Soc. Lond. B, 359(2004), 255-264; Mellars (2006) op. cit.

[8] Klein, R. G. (2000). “Archeology and the Evolution of Human Behavior.” Evolutionary Anthropology, 9(1), 17-36.

[9] Mellars (2006) op. cit.

[10] Powell, A., Shennan, S., and Thomas, M. G. (2009). “Late Pleistocene Demography and the Appearance of Modern Human Behavior.” Science, 324(5 June 2009), 1298-1301; Culotta, E. (2010). “Did Modern Humans Get Smart Or Just Get Together?” Science, 328, 164.

[11] The Neanderthals and other hominids (for example, Homo erectus) had already colonized southern Asia and Europe beginning over a million years ago. See Mithen, S. (1996). The Prehistory of the Mind, London: Thames & Hudson, 29; Forster, P. (2004) op. cit.

[12] Quotations taken, in order, from the following sources: Forster, P. (2004) op. cit.; Mithen, S. (2006). The Singing Neanderthals: The Origins of Music, Language, Mind, and Body, Cambridge, Mass.: Harvard University Press; Tattersall, I. (2008). “An Evolutionary Framework for the Acquisition of Symbolic Cognition by Homo sapiens.” Comparative Cognition & Behavior Reviews, 3, 99-114; Klein, R. G. (2003). “Whither the Neanderthals?” Science, 299, 1525-1527; Proctor, R. N. (2003). “The Roots of Human Recency: Molecular Anthropology, the Refigured Acheulean, and the UNESCO Response to Auschwitz.” Current Anthropology, 44(2: April 2003), 213-239; Ehrlich, P. R. (2002). Human Natures: Genes, Cultures, and the Human Prospect, New York: Penguin. See also Mellars, P. (2005). “The Impossible Coincidence. A Single-Species Model for the Origins of Modern Human Behavior in Europe.” Evolutionary Anthropology, 14(1), 12-27 for a valuable discussion on the topic.

[13] Bahn, P. G. (1998). “Neanderthals emancipated.” Nature, 394(20 August 1998), 719-721; Zilhão, J. (2010). “Symbolic use of marine shells and mineral pigments by Iberian Neandertals.” PNAS, 107(3), 1023-1028; d’Errico, F., Zilhão, J., Julien, M., Baffier, D., and Pelegrin, J. (1998). “Neanderthal Acculturation in Western Europe? A Critical Review of the Evidence and Its Interpretation.” Current Anthropology, 39(Supplement), S1-S43.

[14] See page 41.

[15] Wynn, T., and Coolidge, F. L. (2004). “The expert Neandertal mind.” Journal of Human Evolution, 46(4), 467-487.

[16] Conard (2010) op. cit. This viewpoint is also argued by David Lewis-Williams, who writes: “It was not cooperation but social competition and tension that triggered an ever-widening spiral of social, political and technological change that continued long after the last Neanderthal had died, indeed throughout human history.” See Lewis-Williams, D. (2002). The Mind in the Cave, London: Thames & Hudson, 96.

October 12, 2010

The Magical Weave of Language

Here’s a PDF version of the chapter on the emergence of language from my book, Towards a Democracy of Consciousness.

The chapter’s called “The Magical Weave of Language.” It examines what makes language so special as a defining characteristic of humanity, and identifies the prefrontal cortex as the crucial neuroanatomical source of language. It explores theories of language evolution, from the “gradual and early” school to the “sudden and recent” school. It goes on to refute Steven Pinker’s concept of the “language instinct,” arguing instead that we humans have a deeper “patterning instinct” which gets applied to language from the earliest months of infancy. The chapter proposes that there were, in fact, three phases of language evolution, with the most recent phase of modern language involving a crossing of the “metaphoric threshold,” which allowed for the emergence of a “mythic consciousness.” This permitted humans to think for the first time in terms of abstract concepts and begin the never-ending search for meaning in our lives.

September 27, 2010

Is the “language instinct” really a “patterning instinct”?

Steven Pinker’s theory of a “language instinct” has become highly influential in the last 15 years. But I argue in this section of my book, Finding the Li: Towards a Democracy of Consciousness, that it may be something more fundamental – a “patterning instinct” – that enables humans to learn languages so easily. This approach is supported by a barrage of recent criticisms of Pinker’s and Chomsky’s idea that every human being is born with an innate “universal grammar.”

The “language instinct”

“The Language Instinct” is, in fact, the title of a popular book published in 1994 by renowned cognitive scientist Steven Pinker. Pinker’s title says it all, and he makes no bones about his position in the language debate. “Language is not a cultural artifact that we learn the way we learn to tell time or how the federal government works,” he writes. “Instead, it is a distinct piece of the biological makeup of our brains.” He goes on to explain why he uses “the quaint term ‘instinct.’ It conveys the idea that people know how to talk in more or less the sense that spiders know how to spin webs.” As if to draw a line in the sand of linguistic debate, Pinker makes himself even clearer: “Language is no more a cultural invention than is upright posture. It is not a manifestation of a general capacity to use symbols.”[1]

Pinker sees himself as following in a widely respected philosophical tradition begun by Noam Chomsky, probably the most famous linguist of the twentieth century, and generally considered to be the father of modern linguistics. Chomsky believes that every human being has an innate knowledge of language, which he calls a “universal grammar.” The differences in languages around the world merely reflect superficial variations in how the universal grammar is interpreted by different cultures. With his penchant for catchy terms, Pinker calls the innate language in which we think “mentalese,” explaining that knowing a language is simply “knowing how to translate mentalese into strings of words and vice versa. People without language would still have mentalese.”[2]

If language were in fact an instinct, that would surely seem to support the “gradual and early” camp of language evolution, and indeed Pinker makes himself equally clear on this issue, arguing that “there must have been a series of steps leading from no language at all to language as we now find it, each step small enough to have been produced by a random mutation or recombination. Every detail of grammatical competence that we wish to ascribe to selection must have conferred a reproductive advantage on its speakers, and this advantage must be large enough to have become fixed in the ancestral population.”[3]

The combination of Chomsky’s august authority and Pinker’s communicative skills has made this theory of the origins of language widely influential for many years. However, a barrage of criticism has recently been leveled against it, based ultimately on the tenet that it “ignores the central organizing theory of modern biology and all that has sprung from it.”[4] The gist of the argument against a language instinct is that language is far too intricate and changes far too rapidly for any combination of genes to have evolved specifically to control it. It makes much more sense to look for the underlying capabilities that evolved to enable language, rather than to view language itself as the result of evolution. To take a more extreme example for the sake of clarity, if someone argued that there was a “driving instinct” because of the ease with which most people around the world learned to drive a car, we’d want to argue back that we should instead look for the underlying evolved human traits that permitted cars and driving to become ubiquitous, such as our ability to see things far away, to respond quickly to changes in the line of vision, to rapidly assess changes in speed and to employ sophisticated hand/eye/foot coordination. Just as automobiles, roads and freeways took their shape as a result of our human traits and capabilities, so language evolved as a function of what our brains were capable of doing. In the words of one well-regarded team, “language is easy for us to learn and use, not because our brains embody knowledge of language, but because language has adapted to our brains.”[5]*

An important breakthrough in the debate about a language instinct has been offered by researcher Patricia Kuhl, who has carefully studied how infants distinguish between the different sounds they hear when people speak to them.[6] Kuhl has shown that long before infants have any idea that such a thing as language exists, they are already able to distinguish the different sounds, or “phonetic units,” that make up human speech. What’s fascinating is that an infant, in her first six months, will discriminate between all kinds of phonetic units, regardless of the language used. By nine months, however, she’s already more interested in the phonetic units of her particular language. So, for example, “American infants listen longer to English words, whereas Dutch infants show a listening preference for Dutch words.” By twelve months, the infant has learned to ignore phonetic units that don’t exist in her own language, and can “no longer discriminate non-native phonetic contrasts.” The likely reason for this is that right from the beginning, an infant’s mind uses a kind of “statistical inferencing” process[7], looking for patterns in the sounds she hears, and locking into the more frequent sound patterns. As time goes on, the infant gets increasingly adept at distinguishing the sound patterns of her own language and begins ignoring those that don’t fit into the patterns she’s already identified. On the basis of these findings, we can perhaps say that humans possess a “patterning instinct” rather than a language instinct. Because all infants grow up in societies where language is spoken, this underlying patterning instinct locks into the patterns of language; and it’s this second-order application of the “patterning instinct” that Chomsky and Pinker have seen as a “language instinct.”

But Kuhl’s research demonstrates something even more far-reaching in its implications than a resolution of this particular language debate. It shows, in her words, that “language experience warps perception.” By a very early age, the infant’s brain has literally been shaped by the language that she hears around her, causing her to notice some distinctions in sounds and to ignore others. This early shaping quickly hardens, like a plastic molding, for the rest of her life. As an example of this, Kuhl describes the inability of monolingual Japanese speakers to distinguish between the sounds /r/ and /l/, even though to a Western speaker this distinction seems obvious. Japanese listeners “hear one category of sounds, not two.” These results suggest to Kuhl that “linguistic experience produces mental maps for speech that differ substantially for speakers of different languages.”[8]

If we do, indeed, have a patterning instinct, and if the patterns of the sounds we hear as infants affect the sound patterns we hear for the rest of our lives, then what does that mean for the other kinds of patterns in language? After all, language is not just about sounds. It’s also about symbols and meaning. Is it possible, then, that language shapes our perception, not just of the sounds we hear, but of the very symbols we perceive as having meaning? If this is the case, it implies that, on an evolutionary timescale, language may have been instrumental in shaping how we think, and perhaps even in how the connections within our pfc evolved.

[1] Pinker, S. (1994). The Language Instinct: How the Mind Creates Language, New York: HarperPerennial, 4-5.

[2] Ibid., 44-73.

[3] Pinker, S. and Bloom, P. (1990). “Natural Language and Natural Selection.” Behavioral and Brain Sciences, 13 (4), 707-784.

[4] Margoliash, D., and Nusbaum, H. C. (2009). “Language: the perspective from organismal biology.” Trends in Cognitive Sciences, 13(12), 505-510.

[5] Christiansen, M. H., and Chater, N. (2008). “Language as shaped by the brain.” Behavioral and Brain Sciences, 31, 489-558. For other critiques of the theory of a “language instinct” and “universal grammar,” see Evans, N. (2003). “Context, Culture, and Structuration in the Languages of Australia.” Annual Review of Anthropology, 32, 13-40; Chater, N., Reali, F., and Christiansen, M. H. (2009). “Restrictions on biological adaptation in language evolution.” PNAS, 106(4), 1015-1020; Deacon, op. cit., 27; Fauconnier, G., and Turner, M. (2002). The Way We Think: Conceptual Blending and the Mind’s Hidden Complexities, New York: Basic Books, 173; Aboitiz, F., and Garcia, R. V. (1997). “The evolutionary origin of the language areas in the human brain. A neuroanatomical perspective.” Brain Research Reviews, 25, 381-396; and Tomasello, M. (2000). The Cultural Origins of Human Cognition, Cambridge, Mass.: Harvard University Press, 94.

[6] Kuhl, P. K. (2000). “A new view of language acquisition.” PNAS, 97(22), 11850-11857.

[7] Fauconnier & Turner 2002, op. cit., 173.

[8] Kuhl, op. cit.

September 24, 2010

Language evolution: “gradual and early” or “sudden and recent”?

Did language evolve early and gradually in human evolution, or was it a more recent development? This is a major topic of debate among linguists, archeologists and anthropologists, with significant implications for understanding how our minds work. This section of my book, Finding the Li: Towards a Democracy of Consciousness, introduces this debate.

Language evolution: “gradual and early” or “sudden and recent”?

It seems, at first sight, fairly straightforward. If language evolved socially as an increasingly sophisticated substitute for grooming, then it must have happened gradually, and a long time ago. It’s therefore no surprise that Aiello and Dunbar, the grooming theorists, are also proponents of the “gradual and early” emergence of language, arguing that “the evolution of language involved a gradual and continuous transition from non-human primate communication systems,” beginning as far back as two million years ago. They believe that language most likely “crossed the Rubicon” to its modern state about 300,000 years ago, shortly preceding the emergence of anatomically modern humans. By 250,000 years ago (an era known as the Middle Paleolithic), they believe “groups would have become so large that language with a significant social information content would have been essential.”[1] They are certainly not alone in this view. For example, another well-regarded team of archaeologists describes “a sense of continuity, rather than discontinuity, between human and nonhuman primate cognitive and communicative abilities… We infer that some form of language originated early in human evolution, and that language existed in a variety of forms throughout its long evolution.”[2]

So what’s the problem? Well, it’s probably become clear by now that language is a network of symbols, connected together by the magical weave of syntax. If that’s the case, then whoever could produce the symbolic expression of language must have been thinking in a symbolic way, and therefore would likely have produced other material expressions of symbolism. It therefore seems reasonable to expect that language users would have left some trace of symbolic artifacts such as body ornamentations (e.g. pierced and/or painted shells), carvings of figures, cave paintings, sophisticated hunting and trapping tools (e.g. boomerangs, bows, nets, spear throwers), and maybe even musical instruments.

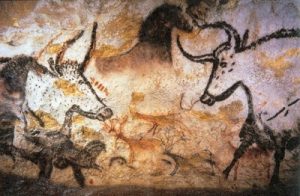

And in fact, the archeological evidence does indeed point to a time when all these clear expressions of symbolic behavior suddenly emerged. There’s just one problem. That time was around thirty to forty thousand years ago in Europe. Most certainly not 250,000 years ago, when Aiello and Dunbar believe that language was “essential.” Here’s how Steve Mithen describes this “creative explosion”:

Art makes a dramatic appearance in the archaeological record. For over 2.5 million years after the first stone tools appear, the closest we get to art are a few scratches on unshaped pieces of bone and stone. It is possible that these scratches have symbolic significance – but this is highly unlikely. They may not even be intentionally made. And then, a mere 30,000 years ago … we find cave paintings in southwest France – paintings that are technically masterful and full of emotive power.[3]

In recent years, as new archaeological findings have been unearthed, the timing for what’s known as the “Upper Paleolithic revolution” has been pushed back to around forty to forty-five thousand years ago, but the shift remains as dramatic as ever. We find the “first consistent presence of symbolic behavior, such as abstract and realistic art and body decoration (e.g., threaded shell beads, teeth, ivory, ostrich egg shells, ochre, and tattoo kits),” ritual artifacts and musical instruments.[4] It’s a veritable “crescendo of change.”[5] This revolution of symbols, which scientist Jared Diamond has aptly named the “Great Leap Forward,”[6] is so important that we’ll be reviewing it in more detail in the next chapter, but for now we need to focus on its implications for when language first emerged.

As you might expect, those who emphasize the symbolic nature of language are the strongest proponents of the “late and sudden” school of language emergence. The most notable of these is the psychologist/archaeologist team Bill Noble and Iain Davidson, who boldly make their claim as follows:

The late emergence of language in the early part of the Upper Pleistocene accounts for the sharp break in the archaeological record after about 40,000 years ago. This involved … world-wide changes in the technology of stone and especially bone tools, the first well-documented evidence for ritual and disposal of the dead, the emergence of regional variation in style, social differentiation, and the emergence of both fisher-gatherer-hunters and agriculturalists. All these characteristics of modern human behavior can be attributed to the greater information flow, planning depth and conceptualization consequent upon the emergence of language.

Noble and Davidson don’t actually claim that language use began forty thousand years ago. They point out that the first human colonization of Australia occurred about twenty thousand years earlier than that, and they believe this huge feat required the sophistication arising from language. On account of this, they’re willing to push back their date of language emergence, concluding that “sometime between about 100,000 and 70,000 years before the present the behaviour emerged which has become identified as linguistic.” Still, a lot later than Aiello and Dunbar’s 250,000 years ago.

The disagreement is not just a matter of timing. It’s also about the way in which language arose. Noble and Davidson believe that, because of the symbolic nature of language, you can no more have a “half-language” than you can be half-pregnant. “Our criterion for symbol-based communication,” they state, “is ‘all-or-none.’… As with the notion of something having, or not having, ‘meaning’, symbols are either present or absent, they cannot be halfway there.” They are joined in this view by Fauconnier and Turner, the team that described the “double-scope conceptual blending” characteristic of language. The appearance of language, they write, is “a discontinuity…, a singularity much like the rapid crystallization that occurs when a dust speck is dropped into a supersaturated solution.”[7] The logic is powerful. Once a group of humans realizes that one symbol (i.e. a word) can relate to another symbol through syntax, then the sky’s the limit. Any word can work. All you need is the underlying set of neural connections to make the realization in the first place, a community that stumbles upon this miraculous power, and then it’s all over. The symbols weave themselves into language, which then reinforces other symbolic networks such as art, religion and tool use. “Language assisted social interaction, social interaction assisted the cultural development of language, and language assisted the elaboration of tool use… all intertwined.”[8]

Archaeologist Richard Klein suggests a genetic mutation may have caused the emergence of modern language

Another celebrated archaeologist, Richard Klein, points out the difference in the sheer complexity of life between the Middle Paleolithic era and the Upper Paleolithic revolution. The artifacts of the Middle Paleolithic were “remarkably homogeneous and invariant over vast areas and long time spans.” Their tools, camp sites and graves were all “remarkably simple.” By contrast, Upper Paleolithic remains are far more complex, implying “ritual or ceremony.” For Klein, the difference is so dramatic that he thinks it could be best explained by a “selectively advantageous genetic mutation” that was, “arguably… the most significant mutation in the human evolutionary series.” What kind of mutation would this have been? “It is especially tempting to conclude,” writes Klein, “that the change was in the neural capacity for language or for ‘symboling.’” Another team of archaeologists gets even more specific, proposing that “a genetic mutation affected neural networks in the prefrontal cortex approximately 60,000 to 130,000 years ago.”[9]

It’s a powerful argument. And one that seems incompatible with the “gradual and early” camp. How should we make sense of it? Perhaps there’s another way to approach the problem. At the beginning of the chapter, I mentioned another raging debate over language: whether or not there’s a “language instinct.” Surely this would help resolve the issue? After all, if there is a language instinct, then you’d think it would be embedded so deep in the human psyche that we must have been talking to each other at least a few hundred thousand years ago. So let’s see what light this other debate sheds on the problem.

[1] Aiello & Dunbar, op. cit.

[2] McBrearty, S., and Brooks, A. S. (2000). “The revolution that wasn’t: a new interpretation of the origin of modern human behavior.” Journal of Human Evolution, 39, 453-563.

[3] Quoted in Fauconnier & Turner, op. cit., 183.

[4] Powell, A., Shennan, S., and Thomas, M. G. (2009). “Late Pleistocene Demography and the Appearance of Modern Human Behavior.” Science, 324, 1298-1301.

[5] Hauser, M. D. (2009). “The possibility of impossible cultures.” Nature, 460, 190-196.

[6] Diamond, J. (1993). The Third Chimpanzee: The Evolution and Future of the Human Animal, New York: Harper Perennial.

[7] Fauconnier, G., and Turner, M. (2002). The Way We Think: Conceptual Blending and the Mind’s Hidden Complexities, New York: Basic Books, 183.

[8] Ibid.

[9] Coolidge, F. L., and Wynn, T. (2005). “Working Memory, its Executive Functions, and the Emergence of Modern Thinking.” Cambridge Archaeological Journal, 15(1), 5-26.

August 30, 2010

The neuroanatomy of language

What parts of the brain are responsible for language? Most people up to speed on the subject would point to Broca’s area and Wernicke’s area. But it’s really the prefrontal cortex and its symbolizing capability that’s responsible for our capacity for language. Here’s a section of my book draft, Finding the Li: Towards a Democracy of Consciousness, that explains in more detail.

The neuroanatomy of language

Considering the crucial importance of the pfc in enabling symbolic thought, it has been relatively ignored until recently as a major anatomical component of our capability for language. Traditionally, when researchers studied the anatomical evolution of language, they focused attention not just on the brain’s capacity but also on our descended larynx, which was thought to be a unique feature of the human vocal tract. However, recent studies have shown that a number of other species, including dogs when barking, lower their larynx during vocalization, and some mammals even have a permanently descended larynx. An even more powerful argument against the descended larynx as a prerequisite of language is that infants born deaf can learn American Sign Language with as much speed and fluency as hearing children learn spoken language. There seems little doubt that the human larynx co-evolved with our language capacity to enable our fine, subtle distinctions in speech sounds, but it doesn’t seem to have been required for language development.[1] In the words of Merlin Donald, “it is the brain, not the vocal cords, that matters most.”[2]

Even within the brain itself, the pfc hasn’t had much press in relation to language. In the late nineteenth century, two European physicians named Paul Broca and Carl Wernicke focused attention on two different regions in the left hemisphere of the cerebral cortex, now named, appropriately enough, Broca’s area and Wernicke’s area, as the parts of the brain that control language. They made their discoveries primarily through observing patients who had suffered physical damage to their brains in these regions and had lost their ability to speak normally (a condition known as aphasia). For over a hundred years, it has been generally accepted that these two areas are the “language centers” of the brain.[3] Equally important, both of these areas lie on the left side of the brain, and in recent decades neuroanatomical research has shown that the left hemisphere is generally the one most used for sequential processing, for creating “a narrative and explanation for our actions,” for acting as our “interpreter.”[4]

However, although Broca’s and Wernicke’s areas have long been viewed as unique to humans, recent research has shown them also to be active in other primates. In one study, for example, the brains of three chimpanzees were scanned as they gestured and called to a person requesting food that was out of their reach. As they did so, the chimps showed activation in the brain region that corresponds to Broca’s area in humans.[5] Terrence Deacon believes that, rather than viewing these areas as “language centers” controlling our ability to speak, we should think of language as using a network of different processes in the brain. Broca’s area is adjacent to the part of the brain that controls our mouth, tongue and larynx; and Wernicke’s area is adjacent to our auditory cortex. Therefore, these areas likely evolved as key nodes in the language network of the brain, which would explain the aphasia resulting from damage to them. “Broca’s and Wernicke’s areas,” Deacon explains, “represent what might be visualized as bottlenecks for information flow during language processing; weak links in a chain of processes.”[6] Neuroscientist Jean-Pierre Changeux agrees, arguing that “efficient communication of contextualized knowledge involves the concerted activity of many more cortical areas than the ‘language areas’ identified by Broca and Wernicke.”[7]

Deacon also warns against reading too much into left hemisphere specialization, known as lateralization. He sees lateralization as “probably a consequence and not a cause or even precondition for language evolution,” pointing out that several other mammals, including other primates, also show lateralization, and that even in humans, nearly 10 percent of people are “not left-lateralized in this way.” Lateralization, in his view, “is more an adaptation of the brain to language than an adaptation of the brain for language.”[8]

So, if it’s not the larynx, not Broca’s and Wernicke’s areas, and not lateralization, is there anything about the human anatomy that makes it uniquely capable of creating language? No prizes for guessing that the answer may be the pfc. As Deacon puts it, “two of the most central features of the human language adaptation” are “the ability to speak and the ability to learn symbolic associations.”[9] We’ve already noted that skilled vocalizations are a helpful, but not a necessary, part of our language capability. So that leaves “the symbol-learning problem,” which “can be traced to the expansion of the prefrontal cortical region, and the preeminence of its projections in competition for synapses throughout the brain.”[10] Changeux once again agrees, noting that “propositions and structured speech can be seen as evolutionary phenomena accompanying the expansion of the prefrontal cortex,”[11] as does celebrated neuroscientist Joaquin Fuster, who writes that “given the role of prefrontal networks in cognitive functions, it is reasonable to infer that the development of those networks underlies the development of highly integrative cognitive functions, such as language.”[12]

If the pfc was, in fact, the central driver of the emergence of language, what light (if any) does that shed on those raging debates about when and at what rate language evolved, and whether there is something that can be called a “language instinct”? In order to answer that, we need to understand a little more about the social context in which language emerged.

[1] For a full review of this issue, see Fitch, W. T. (2005). “The evolution of language: a comparative review.” Biology and Philosophy, 20, 193-230.

[2] Donald, M. (1991). Origins of the Modern Mind: Three Stages in the Evolution of Culture and Cognition, Cambridge, Mass.: Harvard University Press, 39.

[3] See Donald op. cit., 45-94, for a full discussion of the history of anatomical theories of human language.

[4] Gazzaniga, M. S. (2009). “Humans: the party animal.” Dædalus (Summer 2009), 21-34.

[5] Taglialatela, J. P., Russell, J. L., Schaeffer, J. A., and Hopkins, W. D. (2008). “Communicative Signaling Activates ‘Broca’s’ Homolog in Chimpanzees.” Current Biology, 18, 343-348.

[6] Deacon, op. cit., 288.

[7] Changeux, J.-P. (2002). The Physiology of Truth: Neuroscience and Human Knowledge, M. B. DeBevoise, translator, Cambridge, Mass.: Harvard University Press, 123.

[8] Deacon, op. cit., 310, 315. Italics in original.

[9] Ibid., 220.

[10] Ibid.

[11] Changeux, op. cit., 123-4.

[12] Fuster, J. M. (2002). “Frontal lobe and cognitive development.” Journal of Neurocytology, 31, 373-385.

August 23, 2010

Language: weaving a net of symbols

Here’s the first section of Chapter 3 of my book draft, Finding the Li: Towards a Democracy of Consciousness. This chapter’s about the evolution of language. This first section delves into what’s special about language, contrasting it with the calls of vervet monkeys described by Seyfarth & Cheney. It parses a typical sentence to highlight linguistic features such as “double-scope conceptual blending,” displacement, counterfactuals, and the “magical weave” of syntax.

As always, constructive comments are warmly welcomed.

Weaving a net of symbols

Given that it’s something every one of us uses every day of our lives, and which has been studied for millennia, it’s amazing how much the experts still disagree about language. For example, consider the question of when language first emerged. Some researchers argue for a long, slow evolution of language, beginning in the time of our hominid ancestors several million years ago, and gradually developing into what we now think of as modern language. Other experts argue for a much later and more sudden rise of language, perhaps as recently as 40,000 years ago. There’s even more raging disagreement about the relationship of language and our brains. Some famous theorists have proposed that we have a “language instinct,” an innate set of neural pathways that have evolved to comprehend the unique attributes of language such as syntax and grammar. Other researchers argue back that this is impossible, and that what’s innate in our brains is something more fundamental than language itself.

One thing nobody seems to disagree about is the central importance of language to our human experience. “More than any other attribute,” writes one team of biologists, “language is likely to have played a key role in driving genetic and cultural human evolution.”[1] When you consider your daily life, your interactions with your family, your work, even the way you think about things, you quickly realize that language is necessary for virtually everything. In the words of one linguist, “everything you do that makes you human, each one of the countless things you can do that other species can’t, depends crucially on language. Language is what makes us human. Maybe it’s the only thing that makes us human.”[2]

As we’ll see, language is equally important to the rise of the pfc’s power in human consciousness. We’ll explore in this chapter how language first gave the pfc the capability to expand its purview beyond its original biological function. In pursuing this exploration, we’ll find that understanding language in terms of the pfc may help us to untangle some of those debates about language that continue to galvanize the experts, and as we do so, to uncover some insights into the very nature of how we think.

What’s special about language?

First, though, we need to get a handle on what language really is and what’s so special about it. Perhaps a good place to start is what language isn’t. Back in 1980, a team of researchers spent over a year in the Amboseli National Park in Kenya, watching groups of vervet monkeys interact, and recording their vocalizations. What they found made waves in the field of animal communication. The monkeys have three important natural predators: leopards, eagles and pythons, each of which has a different style of attacking them, either jumping at them, attacking from the sky or from the ground. The researchers discovered that the monkeys had developed completely different vocalizations to warn their group of each predator: short tonal calls for leopards, low-pitched staccato grunts for eagles and high-pitched “chutters” for snakes. When the monkeys heard the leopard call, they’d climb up in the trees; an eagle call caused them to look up or run into dense bush; and a snake call had them looking down at the ground around them. The researchers could induce the different behaviors in the monkeys by playing tape recordings of each call. “By giving acoustically distinct alarms to different predators,” they explained, “vervet monkeys effectively categorized other species.” These fascinating findings showed that vervet monkeys were capable of what was described as “perceptual categorization… of rudimentary semantic signals.”[3] It certainly showed how smart vervet monkeys are. But it wasn’t language.

A fundamental characteristic of language is that, in the words of researchers Noble and Davidson, it involves the “symbolic use of communicative signs”.[4] Anthropologist and neuroscientist Terrence Deacon agrees with this, suggesting that “when we strip away the complexity, only one significant difference between language and nonlanguage communication remains: the common, everyday miracle of word meaning and reference … which can be termed symbolic reference.”[5] But, an alert reader might ask at this point, wasn’t that what the vervet monkeys were doing? If we consider the definition of “symbol” from the previous chapter, as something that has a purely arbitrary relationship to what it signifies, then the vervet calls seem to meet that definition. It’s only because the other vervet monkeys understand the meaning of the grunts or chutters that they know whether to look up or look down. That may be true, but there’s another aspect of language that sets it apart from the vervet calls: syntax.

“Animal communication is typically non-syntactic, which means that signals refer to whole situations,” explains a team of language researchers. Human language, on the other hand, “is syntactic, and signals consist of discrete components that have their own meaning… The vast expressive power of human language would be impossible without syntax, and the transition from non-syntactic to syntactic communication was an essential step in the evolution of human language.”[6] So, when a vervet monkey gives a low-pitched grunt, he’s not saying the word “eagle.” He’s saying, in one grunt: “There’s an eagle coming, and we’d all better head for the bushes.” If he grunted twice, that wouldn’t mean “two eagles.” And if he gave out a grunt followed by a chutter, that wouldn’t mean “an eagle just attacked a snake.” The vervet monkeys can’t get out of the context of their specific situation. They can’t use syntax to make “infinite use of finite means.”[7]
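To make the contrast concrete, here is a hypothetical sketch. The vocabularies are invented, and this is not the mathematical model Nowak and colleagues actually use. In a holistic, vervet-like system, each signal stands for one whole situation. In a syntactic system, separate signals for agents and actions are combined by a rule, so a handful of words covers many situations, including ones never signaled before.

```python
from itertools import product

# Holistic (vervet-like) system: each signal stands for a whole situation.
holistic = {
    "tonal_call": "leopard nearby, climb a tree",
    "grunt": "eagle coming, head for the bushes",
    "chutter": "snake nearby, look at the ground",
}

# Syntactic system (toy assumption): separate signals for agents and actions,
# combined by a single subject-verb-object rule.
nouns = {"eagle": "an eagle", "snake": "a snake", "leopard": "a leopard"}
verbs = {"attacks": "attacks", "sees": "sees"}

def compose(subject, verb, obj):
    """Combine three word signals into one meaning using a fixed word order."""
    return f"{nouns[subject]} {verbs[verb]} {nouns[obj]}"

# Every combination of the finite word list yields a distinct meaning.
sentences = [compose(s, v, o) for s, v, o in product(nouns, verbs, nouns)]
print(len(holistic))                          # 3 situations from 3 signals
print(len(sentences))                         # 18 situations from 5 words
print(compose("eagle", "attacks", "snake"))   # an eagle attacks a snake
```

Adding one noun to the syntactic system multiplies the number of expressible situations, whereas the holistic system needs a brand-new call for every new situation. The growth here is still finite. Unbounded expressiveness needs the recursion discussed below.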

To fully understand the power of language, consider the following sentence:

You remember that guy from New York we met at the cocktail party the other day, who told us that if the Fed doesn’t ease the money supply, stocks would fall?

It seems like a simple sentence, but there’s a lot going on under the surface. First, let’s begin with the words “cocktail party.” A cocktail refers to a mixed drink. A party refers to a group of people getting together. But we all know that “cocktail party” refers to a specific type of party. It wasn’t necessary for anyone to actually be drinking a cocktail to make it a “cocktail party.” They might have been serving wine and champagne, but we wouldn’t call it a “wine and champagne party.” This crucial element of language takes two completely separate aspects of reality – a mixed drink and a social gathering – and blends them together to create a brand new concept. Cognitive scientists Fauconnier and Turner have aptly called this “conceptual blending” and consider it to be “one (and perhaps the) mental operation whose evolution was crucial for language.”[8]

But the complexity really gets going when we come to phrases like “ease the money supply” and “stocks would fall.” Here, we meet one of the most ubiquitous aspects of modern language, which is the use of tangible metaphors to convey abstract meaning. We’re so comfortable with these metaphors in our daily language that we don’t even consider them as such, but ponder for a moment what it means to “ease the money supply.” There’s an underlying metaphor of some kind of reservoir of liquid, perhaps water, which would normally come flowing out to people. But someone has their hands on a lever of some sort, which keeps the supply controlled. Now, this person – the Fed – wants everyone to have a little more of the liquid, so they ease up on the lever, allowing some more to flow out. Similarly, stocks don’t really fall. People, animals or things might fall, off a table or out of a tree. But of course when something falls, it goes from a high position to a lower position. So, we naturally understand that a falling stock is one whose price is moving from higher to lower. These metaphors are examples of what Fauconnier and Turner see as an advanced form of conceptual blending, which they term “double-scope conceptual blending.” It’s called “double scope” because it integrates “two or more conceptual arrays… which typically conflict in radical ways on vital conceptual relations” – such as in this case stock prices and falling things – into a “novel conceptual array” which “develops emergent structure not found in either of the inputs.”[9]

There’s still more amazing complexity to that simple sentence. Notice that it’s referring to someone we met “the other day.” He’s not there talking to us now. It all happened somewhere else and in the past, but through language we can bring the past back to the present in a matter of seconds, and we can whisk people or things from anywhere in the universe to be present in our minds with just a few words. This near-magical power of language is known as displacement, “the ability to make reference to absent entities.”[10]

The magic of language goes even further than displacement. Consider that we’re being asked to imagine a scenario where stocks would fall if the Fed doesn’t ease the money supply. This is something that hasn’t actually happened. It may never happen. But we can still talk about the scenario with as much ease as if it were happening right now. A hypothetical situation conjured out of thin air in this way is known as a “counterfactual”: a reference to something that’s not a concrete fact but can still exist in our minds and be communicated through language.

There’s already a lot to be impressed by in that one sentence, with its double-scope conceptual blending, its displacements and its counterfactuals. But the crowning feature of this sentence, and of most other sentences in every language of the world, is its syntax. If language is like a net of symbols, we can think of syntax as a magical weave that can link each section of the net to any other section at a moment’s notice. Look at how many miraculous conceptual leaps we make while still holding a meaningful narrative together in our minds. (1) “You remember” (asked in a questioning tone): we’re asked to access our memory; (2) “that guy”: focus on the category of male humans; (3) “from New York”: narrow down that category based on where the person is from; (4) “we met at the cocktail party the other day”: create a mental image of the party; (5) “who told us”: shift from a mere recall of the person to a recollection of the conversation; (6) “if the Fed doesn’t ease the money supply…”: abrupt transition from an image of the cocktail party to a hypothetical financial scenario.

This magical weave that we pull off incessantly every day without even being aware of it is known as “recursion,” and is viewed as the most powerful and characteristic feature of modern language, accomplished by the proper placement and linkage of multiple concepts through the syntax of the sentence. Humans alone took “the power of recursion to create an open-ended and limitless system of communication,” writes a team of linguistic experts, who propose that this power was perhaps “a consequence (by-product)” of some kind of “neural reorganization” that arose from evolutionary pressure on humans, causing previously separate modular aspects of the brain to connect together and create new meaning.[11] As we already know from the previous chapter, there’s one part of the brain that’s uniquely connected to permit this cognitive fluidity that underlies our human capabilities: the pfc.
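A minimal sketch of recursion follows. It assumes a toy grammar with one invented rule: a noun phrase is either a person or a person “who met” another noun phrase. This is not a model taken from Hauser, Chomsky and Fitch. Because the rule can call itself, one finite rule yields phrases of unbounded depth, which is the “infinite use of finite means” quoted earlier.

```python
# Hypothetical vocabulary for the toy grammar.
PEOPLE = ["the guy", "the woman", "the banker"]

def noun_phrase(depth, i=0):
    """Build a noun phrase with `depth` nested relative clauses.

    Toy rule (an assumption): NP -> PERSON | PERSON 'who met' NP
    """
    head = PEOPLE[i % len(PEOPLE)]
    if depth == 0:
        return head  # base case: a bare noun phrase
    return f"{head} who met {noun_phrase(depth - 1, i + 1)}"  # the rule reuses itself

for d in range(3):
    print(f"You remember {noun_phrase(d)}?")
# You remember the guy?
# You remember the guy who met the woman?
# You remember the guy who met the woman who met the banker?
```

In practice, human memory limits how deeply we can comfortably nest clauses, but the rule itself places no limit on depth.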

[1] Szathmary, E., and Szamado, S. (2008). “Language: a social history of words.” Nature, 456, 40-41.

[2] Bickerton, D. (2009). Adam’s Tongue: How Humans Made Language, How Language Made Humans, New York: Hill and Wang, 4.

[3] Seyfarth, R. M., Cheney, D. L., and Marler, P. (1980). “Monkey Responses to Three Different Alarm Calls: Evidence of Predator Classification and Semantic Communication.” Science, 210, 801-803.

[4] Noble, W., and Davidson, I. (1991). “The Evolutionary Emergence of Modern Human Behaviour: Language and its Archaeology.” Man, 26(2), 223-253.

[5] Deacon, T. W. (1997). The Symbolic Species: The Co-evolution of Language and the Brain, New York: Norton, 43 – italics in the original.

[6] Nowak, M. A., Plotkin, J. B., and Jansen, V. A. A. (2000). “The evolution of syntactic communication.” Nature, 404, 495-498.

[7] Nowak, ibid.

[8] Fauconnier, G., and Turner, M. (2008). “The origin of language as a product of the evolution of double-scope blending.” Behavioral and Brain Sciences, 31(5), 520-521.

[9] Fauconnier & Turner, ibid.

[10] Liszkowski, U., Schafer, M., Carpenter, M., and Tomasello, M. (2009). “Prelinguistic Infants, but Not Chimpanzees, Communicate About Absent Entities.” Psychological Science, 20(5), 654-660.

[11] Hauser, M. D., Chomsky, N., and Fitch, W. T. (2002). “The Faculty of Language: What Is It, Who Has It, and How Did It Evolve?” Science, 298, 1569-1579. Without denying the immense importance of recursion, I would note that their view that “no other animal” possesses it is still open to debate. There are possibilities of some kind of recursion in birdsong and in elephant, dolphin and whale communication, which has been described by Marler (1998) as “phonological syntax” in “Animal communication and human language” in: The Origin and Diversification of Language, eds., N. G. Jablonski & L. C. Aiello. California Academy of Sciences.